Description

Over the past century, Fake News has become a significant problem, as the exploitation of news outlets and social media platforms to disseminate misinformation and influence individuals’ actions has become widespread. The negative impact of Fake News, especially in the political, economic, and social spheres, is increasing, which underscores the need to detect and identify Fake News stories in near real time. Furthermore, the use of Artificial Intelligence (AI) to create fake videos, known as “DeepFakes” or “FakeNews 2.0”, is a fast-growing phenomenon that raises major concerns. AI technology enables virtually anyone to create a fake video showing a person or persons performing an action at an event that never occurred. Although DeepFakes are not as prevalent as Fake News articles, they are increasing in popularity and have a much greater effect on the general population. In addition, the sophistication behind DeepFake videos makes them harder to detect and identify, and therefore a much more effective and destructive tool for perpetrators.

As the usage and threat of DeepFakes and Fake News intensify, so do efforts to develop new detection methods, resulting in the next wave of (mis)information warfare. The proliferation of technology, specifically Artificial Intelligence, allows wide-scale, easy-to-use solutions to create synthetic media that seems very real, whether in video, photos, or text. In combination with networks of fake profiles, bot networks, and smart utilization of social media, attackers have many ways to influence either the public or specific individuals. As media and technology are now being used for cyber terrorism, criminal activity, espionage, and military warfare, the new age of information warfare threatens democracies worldwide, pushing governments to combat this threat with large investments.

Intelligence, Law Enforcement, and other Governmental agencies are taking active roles in tackling the threat that (mis)information warfare poses and are spending growing budgets on technology that can detect and counter Fake News and DeepFake media.

The Counter Deepfake and Counter Fake News Software Solutions Market report is the first report to cover this up-and-coming market. It focuses on detection and mitigation solutions for Fake News and DeepFakes:

1. Fake News – the deliberate creation of false reality to influence public perception.

2. DeepFake – manipulated video or audio produced by AI that yields fabricated images and sounds that appear to be real.

In many cases the two are used simultaneously; however, each requires different tools and methods to detect and mitigate. Currently, most market spending goes towards the detection and monitoring side of Information Warfare, while a smaller portion is invested in digital forensics, which tries to trace the source after the damage has been done.

There are two major categories of DeepFake detection tools:

1. Pattern Analysis – observing and analyzing the behavior of people in the videos, learning their patterns, from hand gestures to pauses in speech, and comparing them to real-life patterns. This approach has the advantage of possibly working even if the video quality itself is essentially perfect.

2. Quality of Video Analysis – analyzing the differences between deepfakes and real videos. Most deepfake videos are created by merging individually generated frames into videos. By extracting the essential data from the faces in individual frames of a video and then tracking them through sets of consecutive frames, one can detect inconsistencies in the flow of information from one frame to the next. This can also be used for fake audio detection.

Ultimately, both types of DeepFake detection methods may be needed to mitigate this potential threat.
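
As a rough illustration of the frame-to-frame idea behind Quality of Video Analysis, the sketch below flags transitions where the face data changes far more than usual between neighboring frames. It assumes that a separate, hypothetical upstream step has already reduced each frame's face to a numeric feature vector (for example, landmark coordinates). The function names and the `ratio` threshold are illustrative assumptions, not part of any vendor's product or of the report.

```python
import math
from statistics import median


def frame_jumps(features):
    """Euclidean distance between each pair of neighboring per-frame feature vectors."""
    return [math.dist(a, b) for a, b in zip(features, features[1:])]


def flag_inconsistent_transitions(features, ratio=5.0):
    """Return indices i where the change from frame i to frame i+1 is unusually large.

    A transition is flagged when its distance exceeds `ratio` times the median
    distance across the clip. `ratio` is an assumed, untuned threshold.
    Note: if the median is 0 (a static face), any nonzero jump is flagged.
    """
    jumps = frame_jumps(features)
    if not jumps:
        return []
    typical = median(jumps)
    return [i for i, d in enumerate(jumps) if d > ratio * typical]


# Hypothetical feature vectors: smooth motion with one abrupt discontinuity.
sample = [(0.0, 0.0), (0.1, 0.0), (0.2, 0.0), (2.0, 1.5), (2.1, 1.5), (2.2, 1.5)]
suspicious = flag_inconsistent_transitions(sample)
```

A real detector would likely use learned features and a trained classifier rather than a fixed ratio; the point here is only the comparison of each frame with its neighbor.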

Fake News is disseminated via networks of fake profiles, bot networks, and smart utilization of social media. Therefore, most tools developed and used to detect Fake News are geared towards finding, analyzing, and stopping these networks and bots. These tools are a mix of OSINT and Cyber technologies.
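
One simple signal such network-oriented tools might look for is coordinated posting: several distinct accounts publishing the same text within a short time span. The sketch below is a minimal example under stated assumptions. The input format (account id, text, Unix timestamp), the function name, and the `window_seconds` and `min_accounts` defaults are all hypothetical and are not drawn from any specific product covered in the report.

```python
from collections import defaultdict


def find_coordinated_groups(posts, window_seconds=60, min_accounts=3):
    """Find sets of accounts that posted the same text close together in time.

    posts: iterable of (account_id, text, unix_timestamp) tuples (assumed format).
    Returns a list of sets of account ids, one set per suspicious text.
    """
    by_text = defaultdict(list)
    for account, text, ts in posts:
        # Normalize case and whitespace so trivially altered copies still match.
        key = " ".join(text.lower().split())
        by_text[key].append((ts, account))

    groups = []
    for items in by_text.values():
        items.sort()  # order by timestamp
        start = 0
        best = set()
        for end in range(len(items)):
            # Shrink the window until it spans at most window_seconds.
            while items[end][0] - items[start][0] > window_seconds:
                start += 1
            accounts = {acc for _, acc in items[start:end + 1]}
            if len(accounts) > len(best):
                best = accounts
        if len(best) >= min_accounts:
            groups.append(best)
    return groups
```

Exact-match grouping is easy to evade, so this should be read as a starting point; fuzzy text matching and account-level features would be natural extensions.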

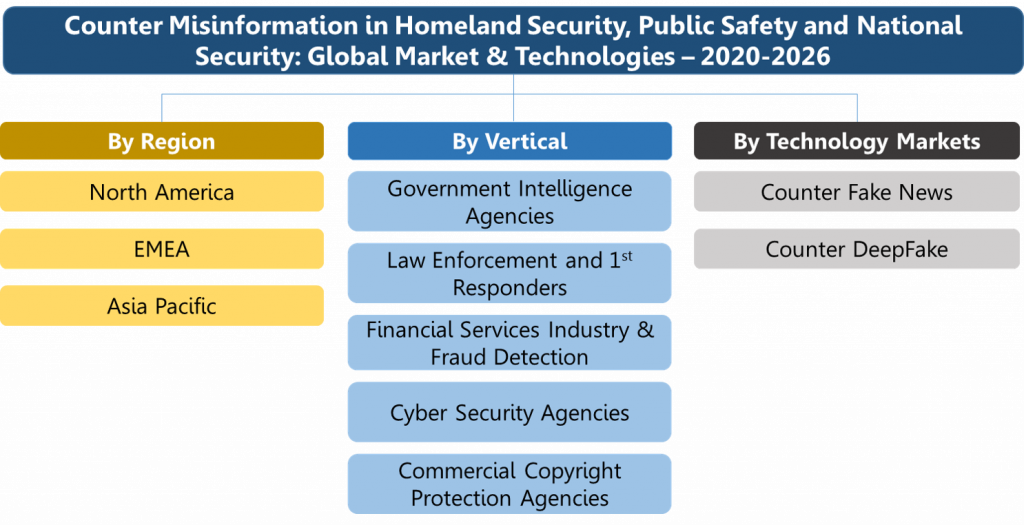

The counter DeepFake and counter Fake News software market forecast report presents 2019 data for each submarket and projects the 2020-2026 market. We address the “money trail” – each dollar spent – via the following 3 orthogonal market segments:

By Type of misinformation:

1. Fake News

2. DeepFake

By End User:

1. Police & Law Enforcement

2. Intelligence Organizations

3. Military

4. Other Government Organizations

5. Commercial Businesses

6. Consumers

By Regional Markets:

1. North America

2. Europe, Middle East & Africa (EMEA)

3. Asia Pacific

As most misinformation activity and content dissemination takes place on social media platforms, the report covers the efforts of tech giants to tackle this threat on their platforms and beyond. Facebook, Twitter, Microsoft, YouTube, and other leading content platforms are investing time and money to better understand and detect deepfakes and to make sure their platforms are not misused by criminals and state-owned operators. However, these efforts alone will not be enough, and other institutions will have to take a more prominent role by allocating larger budgets to purchase or develop capabilities that mitigate the risk. In addition to detecting these fakes, journalists and social media platforms also need to figure out how best to warn people about deepfakes once they are detected, in order to minimize the damage done.

As in most technology-heavy markets, end users are debating whether to work with an external vendor or to rely on internally developed capabilities, as well as on existing OSINT monitoring tools redirected to a dedicated team. The report contains a detailed list and short profiles of the companies that provide solutions for counter DeepFake and Fake News monitoring and detection. Many of these companies are startups, such as Cheq, Metafact, Cyabra, Falso Tech, Sensity, and others. In addition, large and mature corporations are also active in the market, some via M&A activity and others by developing solutions internally. Such players include Axon, Microsoft, Facebook, Twitter, and others.

These companies are leveraging several high-end technologies and methods to tackle this threat, such as:

1. AI analytics that can identify synthetic media

2. Digital authentication solutions

3. Content and social media monitoring tools (OSINT)

4. Fake profile detectors

5. Fact-checking tools

6. Related cybercrime activity

In addition to technological solutions, regulatory frameworks are also being put forth by judicial systems across the globe as a way to deal with this threat. However, many issues regarding personal rights and freedom of speech make it hard to fight these kinds of campaigns through the law alone.

Questions answered in this market report include:

1. What will be the counter deepfake and counter fake news software market size in 2019-2025? What will the relevant trends be during that time?

2. What will be the deepfake detection software market size in 2019-2025?

3. What will be the fake news detection software market size in 2019-2025?

4. Which submarkets in this field provide attractive business opportunities?

5. Which regions are expected to invest most in these capabilities?

6. Which end users are expected to invest most in these capabilities?

7. What are the market drivers and inhibitors?

8. Who are the prominent vendors in the market?

9. What types of regulations are countries implementing to fight the threats posed by deepfake and fake news activities?

Ideally, a deepfake verification tool should be available to everyone. However, this technology is in the early stages of development. Furthermore, developers need to improve the tools and protect them against hackers before releasing them broadly. Therefore, HSRC estimates this market will grow rapidly during the forecast period.